Table of Contents

- Hook: One Search. One Click. One Second. And Your Account Is Suddenly Flagged.

- The Internet Is Not the Wild West Anymore — And Search Isn’t “Free” Like It Used to Be

- What Are “Banned X-Rated Search Terms” and How Do Category Bans Work?

- The Hidden System Behind Your Searches: Algorithms, Safety Layers, and Red Lines

- Why Tech Platforms Are Doing This: Safety, Liability, and… Money

- The Debate: Is This Protection… or Quiet Digital Censorship?

- Category Bans Don’t Just Affect Your Content—They Can Shape Your Digital Identity

- What This Means for Parents, Teens, and Everyday Users

- Your Data, Your Risk: How Banned Searches Tie into Digital Footprints

- If This Happened to You, How Would You React?

- So What Can You Actually Do to Protect Yourself?

- The Bigger Question: Who Should Control What We’re Allowed to Search?

- Final Thought: The Internet Remembers What You Type—Even When You Forget

Hook: One Search. One Click. One Second. And Your Account Is Suddenly Flagged.

You’re scrolling late at night.

Curious, bored, or just wandering the internet rabbit hole.

You type a word into a search bar—maybe as a joke, maybe by accident, maybe out of curiosity.

You hit enter.

And in the background, completely invisible to you, something happens:

Your account gets quietly flagged.

Your activity is logged.

Your future recommendations change.

On some platforms, you might even be instantly blocked from viewing certain content categories, or worse—locked out of key features.

Why?

Because more and more major platforms are creating lists of banned X-rated search terms that trigger automatic restrictions, content filters, and sometimes account category bans.

And most users have no idea this is happening.

The internet is changing.

Search is no longer neutral.

And the words you type are now part of a much bigger decision about what you’re allowed to see—and who you’re allowed to be—online.

The Internet Is Not the Wild West Anymore — And Search Isn’t “Free” Like It Used to Be

For years, the internet felt limitless.

If you could think it, you could search it.

If you could search it, you could probably find it.

But the last decade has changed everything.

Tech companies now face pressure from:

- governments

- advertisers

- parents

- regulators

- mental health experts

…to clean up their platforms.

It’s not just about removing illegal content.

It’s about what kind of digital environment these platforms want to be.

So they’ve started with a simple but powerful weapon:

Search filters.

Instead of waiting to delete content after it appears, they’re cutting things off at the root:

If you can’t search for it, you can’t find it.

If you can’t find it, you can’t interact with it.

If you can’t interact with it, it can’t spread.

At least, that’s the theory.

What Are “Banned X-Rated Search Terms” and How Do Category Bans Work?

Let’s be clear:

We’re not talking about innocent words, romantic conversations, or general adult topics.

We’re talking about:

- explicit sexual keywords

- exploitative or abusive themes

- content linked to illegal activities

- search phrases commonly used to find hardcore adult material, sometimes involving harm

These terms are so tied to adult or dangerous content that platforms don’t just want to hide them—they want to erase them from the user experience.

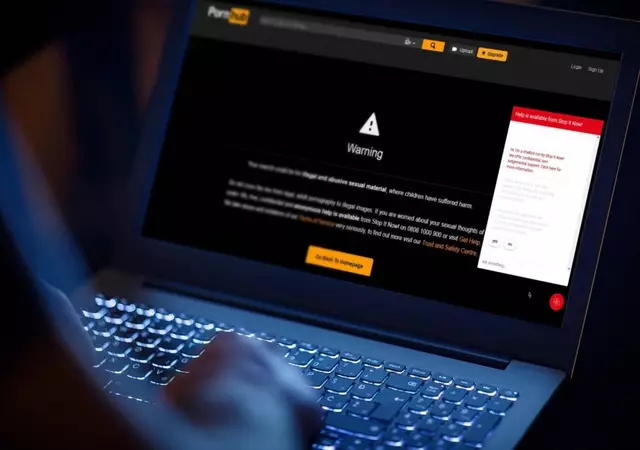

Here’s what often happens when someone types one in:

- The search is blocked (no results or a generic warning message).

- The account gets categorized into a restricted profile (for content, ads, or recommendations).

- In some cases, repeated searches may trigger temporary or permanent bans from specific features, categories, or even entire platforms.

This is what’s known as a category ban.

You might not be kicked off the site completely…

But you might be:

- banned from viewing live content

- blocked from commenting

- unable to see certain tags, creators, or videos

- cut off from search entirely in specific topics

And it can all start with a single search.
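To make the mechanics concrete, here is a minimal sketch of how a blocked search could escalate into a category ban. It is an illustration, not any platform's real implementation: the term list, the `Account` class, the `handle_search` function, and the three-strike threshold are all hypothetical assumptions.

```python
from dataclasses import dataclass, field

# Hypothetical placeholder terms; real platform lists are not public.
BLOCKED_TERMS = {"blockedterm1", "blockedterm2"}

# Assumed threshold; an actual platform could act on the very first match.
STRIKES_BEFORE_BAN = 3


@dataclass
class Account:
    user_id: str
    strikes: int = 0
    banned_categories: set = field(default_factory=set)


def handle_search(account, query, category="adult"):
    """Return 'results', 'blocked', or 'category_banned' for one search."""
    words = set(query.lower().split())

    # No blocked term in the query: the search goes through normally.
    if not words & BLOCKED_TERMS:
        return "results"

    # A blocked term was found: record a strike against the account.
    account.strikes += 1

    # Repeated matches escalate from a blocked search to a category ban.
    if account.strikes >= STRIKES_BEFORE_BAN:
        account.banned_categories.add(category)
        return "category_banned"
    return "blocked"
```

In this sketch the user never sees the strike counter. They only see the outcome, which is why a restriction can feel like it came out of nowhere.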

The Hidden System Behind Your Searches: Algorithms, Safety Layers, and Red Lines

Every time you search something online, the platform isn’t just looking for results.

It’s also:

- analyzing your intent

- filtering what it considers unsafe

- updating your behavioral profile

- connecting your search history to advertising categories

- ranking your “trustworthiness” as a user

Now add banned terms into the mix.

Many platforms now maintain internal lists that are:

- not publicly disclosed

- constantly updated

- influenced by legal guidelines

- affected by advertiser preferences

- adjusted according to age, region, and device

When those words are detected:

- a safety system kicks in

- moderators may be alerted in extreme cases

- your account may be auto-labeled as “adult interest,” “restricted,” or “high-risk category”

And here’s the important part:

You might never be explicitly told.

You just start noticing things like:

- “This feature is unavailable.”

- “You are not allowed to view this content.”

- “Your account does not qualify for this section.”

It feels random.

But it’s not random at all.
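A rough sketch shows why the same search can have different consequences for different people. It assumes a base list that is extended by region and by age, and an internal label the user never sees. Every name here (`BASE_TERMS`, `REGION_TERMS`, `MINOR_TERMS`, `effective_blocklist`, `label_for`, `user_facing_message`) and the age cutoff of 18 are hypothetical, chosen only for illustration.

```python
# Hypothetical placeholder lists; real ones are not publicly disclosed.
BASE_TERMS = {"term_a"}
REGION_TERMS = {"region_x": {"term_b"}}  # extra terms for one region
MINOR_TERMS = {"term_c"}                 # extra terms for under-18 accounts


def effective_blocklist(age, region):
    """Build the list that applies to this particular user."""
    terms = set(BASE_TERMS)
    terms |= REGION_TERMS.get(region, set())
    if age < 18:  # assumed cutoff
        terms |= MINOR_TERMS
    return terms


def label_for(query, age, region):
    """Return the internal label a query would trigger, or None."""
    hits = set(query.lower().split()) & effective_blocklist(age, region)
    if not hits:
        return None
    return "high-risk category" if age < 18 else "restricted"


def user_facing_message(label):
    """The user sees a generic notice, never the internal label."""
    return "This feature is unavailable." if label else None
```

Under these assumptions, a query containing `term_c` triggers nothing for an adult but labels a 15-year-old's account, and in both cases the label itself stays hidden.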

Why Tech Platforms Are Doing This: Safety, Liability, and… Money

Why go so hard on X-rated search terms now?

Three big reasons:

1. Legal and Regulatory Pressure

Governments are tightening laws around:

- online exploitation

- harmful content

- underage users accessing adult material

- platforms ignoring reports of illegal content

Companies don’t want billion-dollar fines or public scandal.

So they overcorrect.

If in doubt, block it.

2. Brand and Advertising Safety

Advertisers pay the bills.

And advertisers do not want their finance, health, travel, or home improvement ads showing up:

- next to explicit searches

- in adult-only categories

- on user accounts flagged for “unsafe” behavior

So platforms build ad-safe environments.

That means:

- banning certain words

- isolating risky users into “unmonetizable” zones

- making sure mainstream, high-paying topics (like finance, health, and travel) stay “clean”

From a business perspective, your search history doesn’t just affect what you see…

It affects how valuable you are as a user.

3. User Safety, Especially for Minors

There’s one thing platforms fear more than bad press:

being blamed for harm to children.

So if there’s even a chance that younger users see sexual content because of search, platforms step in hard.

Search is now heavily:

- age-gated

- monitored

- filtered

- censored

Some would say: finally.

Others would say: dangerously overreaching.

Which brings us to the next part.

The Debate: Is This Protection… or Quiet Digital Censorship?

On one side, people say:

“Good. The internet shouldn’t be a free-for-all. Platforms should block harmful content.”

On the other, critics argue:

“Where does it stop? Who gets to decide what’s ‘too explicit’ or ‘too adult’? How much power do companies have over what we’re allowed to search?”

This isn’t just about erotic content.

It’s about:

- control

- power

- freedom of access

- the right to information

Because once companies start blocking one category of terms, they have the infrastructure to block others.

Today: X-rated searches.

Tomorrow: controversial politics?

Sensitive health topics?

Certain communities or identities?

That slippery slope is what worries digital rights advocates the most.

Category Bans Don’t Just Affect Your Content—They Can Shape Your Digital Identity

Here’s something most people never think about:

When your searches place you in a certain “category,” it doesn’t just affect what you can’t see.

It changes what you do see.

Your feed, your recommendations, and your suggested content all become shaped by what the system believes about you.

That means:

- fewer educational resources

- fewer mainstream suggestions

- more extreme, niche, or isolated content

- more aggressive filtering of your outgoing posts or comments

Over time, this can:

- distort your sense of “normal”

- reinforce certain patterns

- cut you off from broader conversations

All because of a handful of search terms typed months ago.

What This Means for Parents, Teens, and Everyday Users

This kind of system has very real consequences for different people.

For Parents

It’s a wake-up call that parental control isn’t just about installing apps—it’s about understanding:

- what platforms allow

- how search filters work

- what your kids might stumble into

- how to talk about online curiosity instead of just forbidding it

For Teens and Young Adults

It means:

- your curious searches can have long-term account consequences

- some platforms may restrict your visibility based on past behavior

- you could be blocked from certain communities or features without realizing why

For Women and Marginalized Users

Sometimes, educational or advocacy content around bodies, sexuality, or identity can be incorrectly swept into “X-rated” flags.

This makes it harder to access:

- sexual health education

- body positivity content

- trauma recovery resources

- relationship counseling

Safety systems are rarely perfect.

And sometimes, the people most in need of safe, accurate information get caught in the crossfire.

Your Data, Your Risk: How Banned Searches Tie into Digital Footprints

Let’s add another layer:

digital identity and long-term data trails.

Your search terms don’t just affect your time on one platform.

They can:

- be stored long-term

- influence ad profiles

- be shared with partnered services

- potentially resurface during investigations

If platforms consider certain searches high-risk or illegal, they may:

- log them more aggressively

- connect them to IP addresses and devices

- include them in trust & safety risk profiles

This matters beyond explicit topics. In principle, records like these could affect:

- future background checks

- access to certain jobs

- visa or travel screenings (in extreme cases where law enforcement is involved)

The bottom line:

Your search bar is not private.

It’s a record.

If This Happened to You, How Would You React?

Imagine you get this notification:

“You are no longer able to access this category due to previous search activity that violated our community guidelines.”

If this happened to you:

- Would you feel angry?

- Would you feel ashamed?

- Would you feel unfairly judged?

- Would you accept it and move on?

- Or would you fight back and demand transparency?

This is the emotional side of invisible moderation.

People are not automatically “bad” because they typed in a word.

Human curiosity is messy.

Behavior is complicated.

Intent matters.

But algorithms don’t understand embarrassment, experimentation, or bad timing.

They only understand patterns.

So What Can You Actually Do to Protect Yourself?

You don’t need to live in fear of your search bar.

But you can be smarter about how you use it.

✅ 1. Assume every search is logged.

If you wouldn’t want it associated with your name in a database, think twice.

✅ 2. Use privacy tools where appropriate.

Incognito mode keeps searches out of your local browser history, but it does not hide them from the platform, your network, or any account you are signed in to.

Privacy-focused search engines can reduce tracking.

✅ 3. Separate curiosity from identity.

Don’t build your main account around risky behavior.

If you must explore adult topics, know the platform’s policies first.

✅ 4. If you’re a parent—talk, don’t just block.

Kids will find ways around filters. Open conversations are much safer than total digital silence.

✅ 5. Learn the platform’s rules.

Most companies publish community guidelines, but few people read them.

Knowing what’s banned gives you power.

The Bigger Question: Who Should Control What We’re Allowed to Search?

At the heart of all this is a philosophical question:

Should corporations have the power to decide which words are too dangerous to even type?

Some argue yes:

- to protect children

- to prevent harm

- to reduce exploitation

Others argue no:

- because it concentrates too much power

- because it can be abused

- because it limits individual freedom

Reality probably lies somewhere in the middle.

Safety matters.

Freedom matters.

The challenge is building a digital world where both can exist without one destroying the other.

Final Thought: The Internet Remembers What You Type—Even When You Forget

You will forget many of your late-night searches.

The internet won’t.

The words you type shape:

- the content you see

- the ads you’re targeted with

- the categories you’re dropped into

- the digital doors that quietly open—or close—to you

So the next time your fingers hover over the keyboard and you’re about to type something extreme, explicit, or risky…

Ask yourself:

If this search cost me a feature, a community, or a platform—would it still be worth it?

Because these days, one search isn’t just a moment.

It’s a signal.

And platforms are listening more closely than ever.