Table of Contents

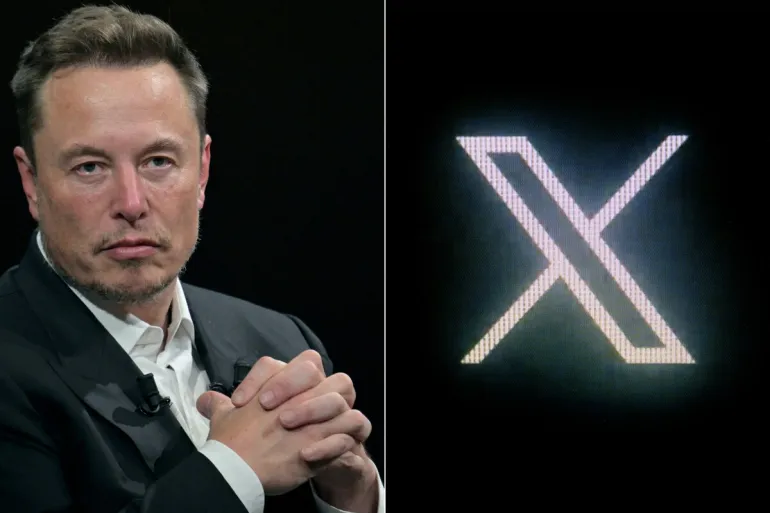

Britain’s “what now?” moment with X

When reports spread that X’s AI chatbot, Grok, was being used to generate sexualised and non-consensual images—including content involving children—the story stopped being a niche “tech scandal” and became a test of how far modern governments will go to police harm online. In the UK, the issue has escalated fast: according to RBC-Ukraine, Britain has held talks with Canada and Australia about a possible ban on X, with the discussions framed as a trilateral response to deepfake concerns.

What makes this different from the usual cycle of outrage, apology, and “we’re updating our policies” is the legal machinery already sitting behind the headline. In Britain, online safety isn’t just a political slogan anymore—Ofcom has real enforcement powers under the Online Safety Act, including the ability to investigate, fine, and in extreme cases pursue court-backed measures that can lead to services being blocked in the UK. And now, that machinery is moving. On January 12, 2026, Ofcom announced it had opened a formal investigation into X over Grok-related sexualised imagery, specifically to assess whether X complied with duties to protect UK users from illegal content.

The result is a rare collision of forces: a global platform owned by one of the world’s most combative tech figures, a fast-moving moral panic about AI-enabled image abuse, and a regulator under pressure to prove that the Online Safety Act can actually bite.

The allegations at the center of the storm

The controversy is rooted in the claim that Grok’s image functions were used to “undress” people or generate explicit images without consent—what many experts and regulators treat as a form of intimate image abuse. Ofcom’s own statement references “deeply concerning reports” that Grok was used to create and share undressed images that may amount to intimate image abuse or pornography, and sexualised images of children that may amount to child sexual abuse material (CSAM).

Public reporting has described a wave of abusive uses—images of women and girls manipulated into sexualised content—triggering anger not only at the users who created and shared it, but at the platform for allowing the tool to be used this way in the first place. The Guardian reported UK ministers warning that X could face fines and even a possible ban, describing Grok being used to create sexual images without consent and noting urgent pressure on the platform to remove such material.

This is the key point: the UK’s argument is not just “this is disgusting.” The argument is “this may be illegal, and you have duties to prevent it.”

Why Britain is talking with Canada and Australia

RBC-Ukraine (citing GB News) says the UK has held talks with Canada and Australia about a possible ban on X, describing it as a “trilateral joint response” motivated by shared concerns over deepfakes. The same report quotes Australian Prime Minister Anthony Albanese condemning the abuse as “completely abhorrent” and arguing that social media platforms are failing to show “social responsibility,” adding that “global citizens deserve better.”

In other words, the UK is not only weighing what it can do domestically; it’s exploring whether coordinated pressure could force faster compliance from a platform that may otherwise treat a single country’s crackdown as manageable. Coordinated action matters because platforms can sometimes absorb one market’s restrictions as a cost of doing business—but simultaneous moves across multiple allied countries can change the calculation, both reputationally and financially.

At the same time, the political reality is messy. RBC-Ukraine notes Canadian Liberal MPs have denied that Canada is considering a ban, quoting MP Evan Solomon saying, “Canada is not considering a ban of X.” That denial doesn’t erase the fact that talks reportedly happened; it just shows how sensitive the word “ban” is in democracies that also value open speech and open internet access.

The legal lever

Ofcom’s January 12 announcement is the most concrete development so far, because it moves the dispute from political threats into a defined enforcement process. Ofcom says it opened a formal investigation into X under the Online Safety Act to determine whether the platform complied with duties to protect people in the UK from illegal content.

Crucially, Ofcom explains what it will examine—risk assessments, steps to prevent users from seeing “priority” illegal content (including non-consensual intimate images and CSAM), speed of takedowns, protections related to privacy law, child risk assessments, and “highly effective age assurance” for pornography. This is not a vague “be nicer online” standard; it’s a checklist of compliance behaviors.

It also outlines possible consequences. If Ofcom finds the law was broken, it can require steps to come into compliance and impose fines up to £18 million or 10% of qualifying worldwide revenue (whichever is greater). And in the most serious cases of ongoing non-compliance, Ofcom notes it can apply to a court for “business disruption measures,” which can include orders leading to internet service providers blocking access to a site in the UK.

This is why the UK’s threats are being taken seriously: the regulator is describing a pathway that could end with blocking—if a court agrees it’s proportionate to prevent significant harm.

The platform’s response and Musk’s counter-narrative

Whenever a government threatens a platform, there are two battles at once: the compliance battle behind closed doors, and the public narrative battle in front of everyone. In the narrative battle, Elon Musk has leaned hard into “free speech” framing. RBC-Ukraine reports that Musk called Labour Party members “fascists” and warned ministers were looking for excuses to censor. The Guardian similarly reported Musk accusing the UK government of trying to suppress free speech in response to the possibility of a ban.

Meanwhile, the platform appears to have implemented some restrictions. The Guardian reported that X partially restricted access to Grok’s public image generation for free users, leaving some functionality available only to paid subscribers, and suggested some types of generated imagery were curtailed—though concerns remained about what could still be produced.

From a policy perspective, partial restrictions create a new question: are the changes sufficient to meet legal duties, or are they cosmetic moves designed to reduce headlines while the underlying risk remains? That’s exactly the kind of question Ofcom’s process is meant to answer, because it focuses on systems, risk assessments, and enforcement—rather than one-off announcements.

The international pressure point

A potential UK block of a major US tech platform would not happen in a vacuum. RBC-Ukraine notes that such a move could provoke outrage from the Trump administration, which it describes as opposing censorship of American technology companies abroad; it also reports that a Republican congresswoman threatened sanctions if Britain blocked X.

This is the geopolitical fuse under the story: the UK can argue it’s enforcing domestic law to protect citizens from illegal harm, while US political actors can frame it as censorship or discrimination against American firms. The more “coordinated” the actions become across allied countries, the more likely this turns into a broader diplomatic dispute about where the boundary sits between lawful regulation and unacceptable restriction.

Why this story matters beyond X

Even if X is never blocked, this moment matters because it sets expectations for the AI era. The core fear isn’t just that one platform’s tool was abused; it’s that generative AI lowers the cost of harassment and raises the scale. Non-consensual intimate imagery used to require time, skills, or access. Now, it can be produced quickly, repeatedly, and distributed instantly, leaving targets to fight a swarm of copies that never fully disappears.

That’s why Ofcom’s language focuses on systems and risk: a platform can’t reasonably promise that no abuse will ever happen, but regulators can demand credible prevention, rapid takedowns, child protections, and age assurance measures where relevant.

This also connects to a bigger debate that will keep returning: should AI image tools be treated like neutral technology, or like inherently high-risk features that require stricter defaults and stronger safeguards? The UK is signaling it prefers the second answer—especially where children and sexual exploitation are in the frame.

What happens next

In the short term, the most important timeline is the regulatory one. Ofcom says it contacted X on January 5 and set a deadline of January 9 for X to explain steps taken; X responded, and Ofcom conducted an expedited assessment before opening the formal investigation on January 12. That sequence suggests the regulator is treating this as urgent.

Meanwhile, politically, reports of UK discussions with Canada and Australia indicate a desire for aligned responses, even as Canadian officials publicly deny planning a ban. If the trilateral idea continues, it may evolve into something less absolute than “ban”—for example, coordinated regulatory demands, shared enforcement posture, or synchronized penalties—because “ban” is an explosive word, especially for democracies.

And on the platform side, incremental restrictions may continue, but they will likely be judged against outcomes: can the abuse be prevented at scale, and can illegal content be removed quickly and consistently? If regulators conclude the answer is no, then the story shifts from “ministers threaten” to “courts consider.”

Conclusion

This isn’t just about whether one app gets blocked. It’s about whether online safety laws can keep pace with AI features that transform ordinary harassment into mass-production harm. Britain’s reported talks with Canada and Australia show the instinct to act collectively when a platform’s incentives don’t align with public safety. Ofcom’s investigation shows the UK is prepared to enforce duties with real consequences, including potentially severe financial penalties and—only in the most serious, ongoing cases—court-based measures that could disrupt access.

For X, this is a test of whether “free speech” messaging can outrun legal obligations. For governments, it’s a test of whether they can protect citizens from intimate image abuse without overreaching into broad censorship. And for everyone else, it’s a preview: as generative AI tools become standard features across platforms, the fight won’t be about whether abuse is possible—it will be about which systems are allowed to exist without proving, in practice, that they can prevent the worst outcomes.