Table of Contents

- When Battlefield Technology Crosses a New Line

- What Foundation Sent to Ukraine

- Why Ukraine Has Become the Testing Ground

- The Moral Case Supporters Are Making

- The Limits of the Phantom MK-1

- Human Control Is Still the Official Red Line

- The Next Model Is Already Coming

- What This Could Mean for the Future of War

When Battlefield Technology Crosses a New Line

When news broke that a U.S. robotics startup had sent humanoid machines into Ukraine for battlefield testing, the story instantly stood out from the already fast-moving world of wartime innovation. Drones have become common. Ground robots are increasingly familiar. But a human-shaped robot stepping into a live war zone feels like something different, something that belongs as much to the future as to the present. Yet this is no longer science fiction. Foundation, a San Francisco-based company, deployed two of its Phantom MK-1 humanoid robots to Ukraine in February 2026 for reconnaissance and field evaluation, in what appears to be the first known case of a humanoid robot being tested near the front lines of the Russia-Ukraine war.

The deployment matters not only because of the machines themselves, but because of what they represent. The Ukraine war has already become a proving ground for drones, autonomous systems, and rapid defense tech iteration. Now it may also be the place where military planners, engineers, and policymakers begin confronting a new question: what happens when humanoid robots stop being prototypes in labs and start becoming tools in conflict zones? The answer is still unclear, but the test itself signals that the next stage of battlefield experimentation may already be underway.

What Foundation Sent to Ukraine

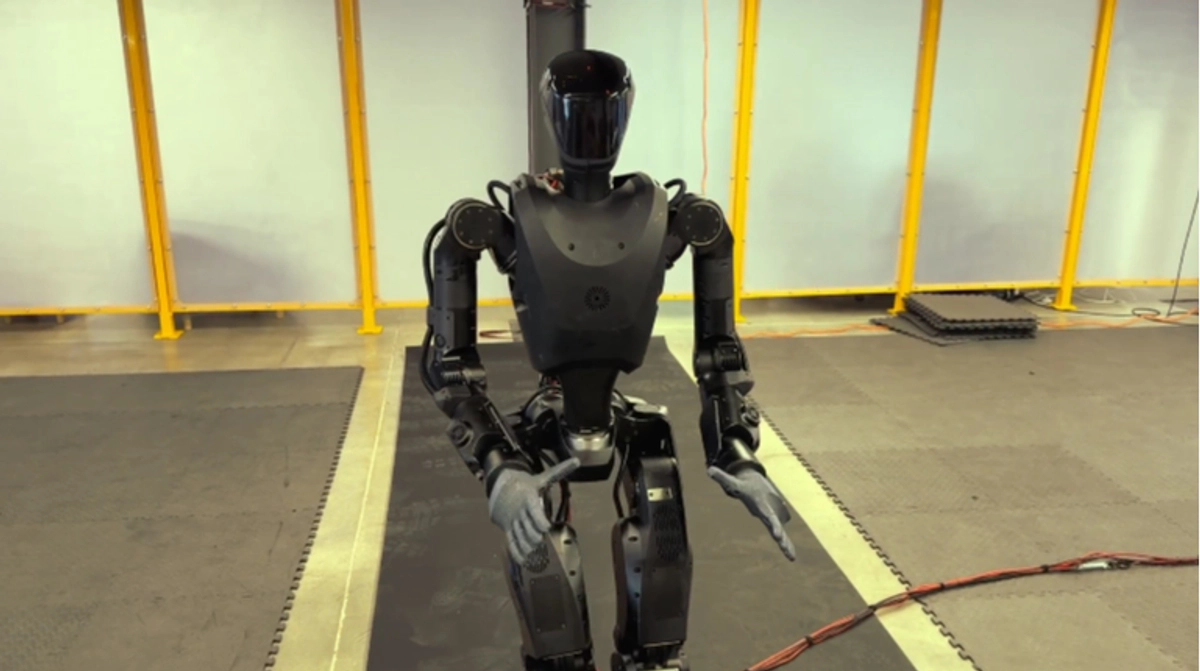

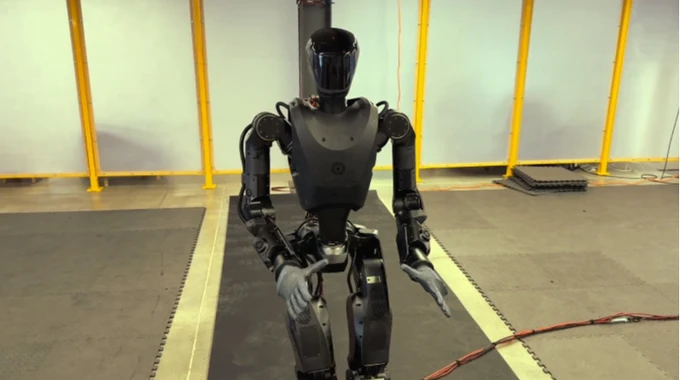

Foundation introduced the Phantom MK-1 in October 2025 as a humanoid platform designed specifically for military environments. According to reporting on the company and its deployment, the robot stands about 5 feet 9 inches tall and weighs roughly 175 to 180 pounds. It was built to operate in dangerous areas where commanders may prefer not to send human troops, especially for high-risk reconnaissance and other exposed tasks.

The two units sent to Ukraine were not described as autonomous combat assassins or fully armed killer machines. Their role, at least for now, is much narrower. They were deployed to carry out reconnaissance duties and to allow engineers to assess how the platform behaves under real battlefield conditions. That distinction is important. Much of the current interest lies in testing durability, mobility, communications, and survivability under combat stress, rather than immediately handing lethal functions to a humanoid robot.

Still, the symbolism is powerful. A humanoid robot carries a different psychological and strategic weight from a wheeled supply machine or a small tracked unmanned vehicle. It invites visions of robotic infantry and autonomous soldiers, even if the present deployment is much more limited. That gap between what the technology is now and what people fear or imagine it could become is a major reason the story has captured so much attention.

Why Ukraine Has Become the Testing Ground

Ukraine did not reach this moment in isolation. Over the past several years, the country has rapidly expanded its use of robotic systems on the battlefield, especially as both sides searched for ways to reduce troop exposure in lethal terrain. According to operational data compiled through Ukraine’s Brave1 defense technology initiative and reported by United24, ground robotic systems were used in more than 7,000 operations in January 2026 alone. That number shows just how deeply robotic systems have already been woven into Ukrainian battlefield operations.

Most of those missions were practical rather than dramatic. Ground robots have been used to carry ammunition, food, weapons, and supplies to frontline positions. They have moved through dangerous zones where ordinary vehicles or exposed infantry would face high risk. Some have also been used to evacuate wounded soldiers, reducing the need for medics or fellow troops to enter active fire zones. Others have supported combat missions when fitted with remotely operated weapon systems.

That growth has been extraordinarily fast. Ukrainian reporting says the country had almost no domestic companies producing ground combat robots before Russia’s full-scale invasion. Now more than 200 companies are manufacturing robotic platforms, and hundreds of designs have been evaluated through the Brave1 program. In other words, Ukraine is not just using robots. It is building an entire wartime ecosystem around them. In that context, a trial involving humanoid systems does not look like a wild outlier. It looks like the next logical experiment in an already mechanized battlefield.

The Moral Case Supporters Are Making

Foundation’s leadership has framed this development in ethical terms as much as technical ones. Mike LeBlanc, a combat veteran and co-founder of the company, told Time that he sees a moral imperative in sending robots to war rather than soldiers. That statement is likely to strike many readers as startling, but it captures the company’s core argument. If machines can absorb the most dangerous frontline roles, then fewer humans may need to be placed in situations where death or catastrophic injury is likely.

This is one of the strongest arguments driving military robotics in general. Supporters say that if a robot can scout a hostile building, cross mined terrain, recover a wounded fighter, or carry supplies through artillery fire, then it can save human lives even without replacing human judgment. In that sense, the promise of humanoid robots is not primarily about making war more aggressive. It is about making some wartime functions less deadly for the humans currently required to do them.

Yet this moral case is only persuasive if the technology truly reduces risk rather than creating new dangers. A robot that breaks down, loses balance, gets captured, or misreads its environment could still put troops in jeopardy. So while the rhetoric of replacing soldiers with machines is compelling, it depends on field performance that remains unproven. The war zone test in Ukraine is partly an attempt to discover whether that promise can survive contact with reality.

The Limits of the Phantom MK-1

For all the excitement, the Phantom MK-1 remains a machine with clear constraints. Reporting on the deployment notes that humanoid robots still face problems common to advanced field robotics: they are heavy, expensive, require regular charging, and may lose balance or fail in harsh terrain and weather. A battlefield is not a polished demonstration floor. Mud, debris, water, damaged infrastructure, signal interference, and unpredictable obstacles can all punish machines in ways that labs cannot fully replicate.

There are also cybersecurity concerns. A captured robot could potentially reveal hardware, software, communications data, or operational patterns. If communications links are compromised, an adversary may be able to interfere with control, intercept sensitive information, or use the device for intelligence gathering. In conventional systems, this is already a concern. With a humanoid platform designed for closer integration into frontline activity, the stakes may be even higher.

Then there is the question of artificial intelligence itself. Even the most advanced models remain imperfect in fast-moving, ambiguous environments. Battlefields are full of incomplete information, decoys, civilian presence, damaged infrastructure, and split-second changes. Any system that interprets conditions incorrectly could cause errors with serious consequences. This is why military and corporate discussions continue to emphasize limits, supervision, and staged deployment rather than immediate autonomy in lethal operations.

Human Control Is Still the Official Red Line

For now, both Foundation and broader defense policy discussions still maintain an important safeguard: humans must remain involved in lethal decision-making. Reporting on the Phantom program says that although the company’s long-term vision includes building a machine that could handle the same weapons a human uses, the current deployment in Ukraine is limited to reconnaissance. More broadly, public statements from major AI and defense stakeholders continue to stress that autonomous weapons should not independently make kill decisions where law or policy requires human control.

That principle, often described as keeping a human in the loop, is central to the current ethical boundary around military AI. It means the machine may assist with movement, sensing, targeting information, or mission support, but a human operator or commander must authorize lethal action. In theory, this preserves accountability and slows the slide toward fully autonomous warfare. In practice, critics worry that once machines become integrated into combat systems, the pressure to expand their autonomy may grow.

This tension is one of the most important parts of the story. The issue is not simply whether a humanoid robot can walk, carry equipment, or scan a trench line. It is whether battlefield adoption of such systems gradually normalizes a future in which human beings rely more and more on machine judgment in matters of life and death. Ukraine’s test deployment does not answer that question, but it certainly brings it closer.

The Next Model Is Already Coming

Even as the Phantom MK-1 undergoes testing, Foundation is reportedly looking ahead to the Phantom MK-2, expected in April 2026. According to coverage of the program, the next version is supposed to include waterproofing, improved electronics, larger battery capacity, and the ability to carry loads up to 80 kilograms. Those upgrades directly address some of the weaknesses associated with the current model, particularly endurance, resilience, and practical utility in rough conditions.

That rapid iteration reflects how defense technology is evolving in wartime. Instead of waiting years between versions, companies now watch how systems behave in field conditions, then quickly redesign around what fails. Ukraine’s battlefield has become one of the clearest examples of that cycle. Drones, electronic warfare tools, ground systems, and now humanoid platforms are being pushed into a loop of deployment, feedback, redesign, and redeployment.

If that cycle continues, humanoid systems could improve faster than skeptics expect. But improvement does not automatically settle the larger questions. A stronger battery and better waterproofing make a robot more useful. They do not resolve the political, legal, and moral debate over how much responsibility warfare should hand to machines.

What This Could Mean for the Future of War

The arrival of the Phantom MK-1 in Ukraine is still only a test, and it would be a mistake to overstate what two robots can do. Yet symbolic first steps matter. The first drones on battlefields were once limited experiments. The first robotic logistics systems were once novelties. Over time, many of those tools became normal. Humanoid military robots may follow the same path, especially if armies conclude that machines can handle reconnaissance, casualty evacuation, resupply, and other hazardous tasks more safely than humans.

At the same time, this story lands in a world already uneasy about AI, surveillance, and autonomy in warfare. A robot shaped like a person intensifies that discomfort because it blurs emotional and operational boundaries. It suggests a future where the battlefield is populated not only by drones overhead and vehicles on tracks, but by upright machines moving through ruins alongside human troops. That image may be technologically premature, but it no longer feels impossible.

What Ukraine is testing now may ultimately prove impractical, too costly, or too fragile. Or it may turn out to be the beginning of a major shift in military doctrine. Either way, the deployment of humanoid robots into an active war zone marks a genuine threshold moment. It shows that the question is no longer whether someone will try to bring humanoid robots into war. That has already happened. The real question now is how far militaries, engineers, and governments are willing to take it.