Table of Contents

- The Job That Pays Up to $440,000 to Build Anime Avatars

- xAI’s Bold Shift in AI Companionship

- The Role of Waifus in Digital Culture

- Why AI Companions Are Becoming Popular

- xAI’s Playful and Provocative Approach

- The Emotional Risks of AI Companionship

- AI and Consent: A New Ethical Frontier

- The Commercial Potential of AI Companions

- AI Companions and the Future of Social Interaction

- A Brave New World of AI Companionship

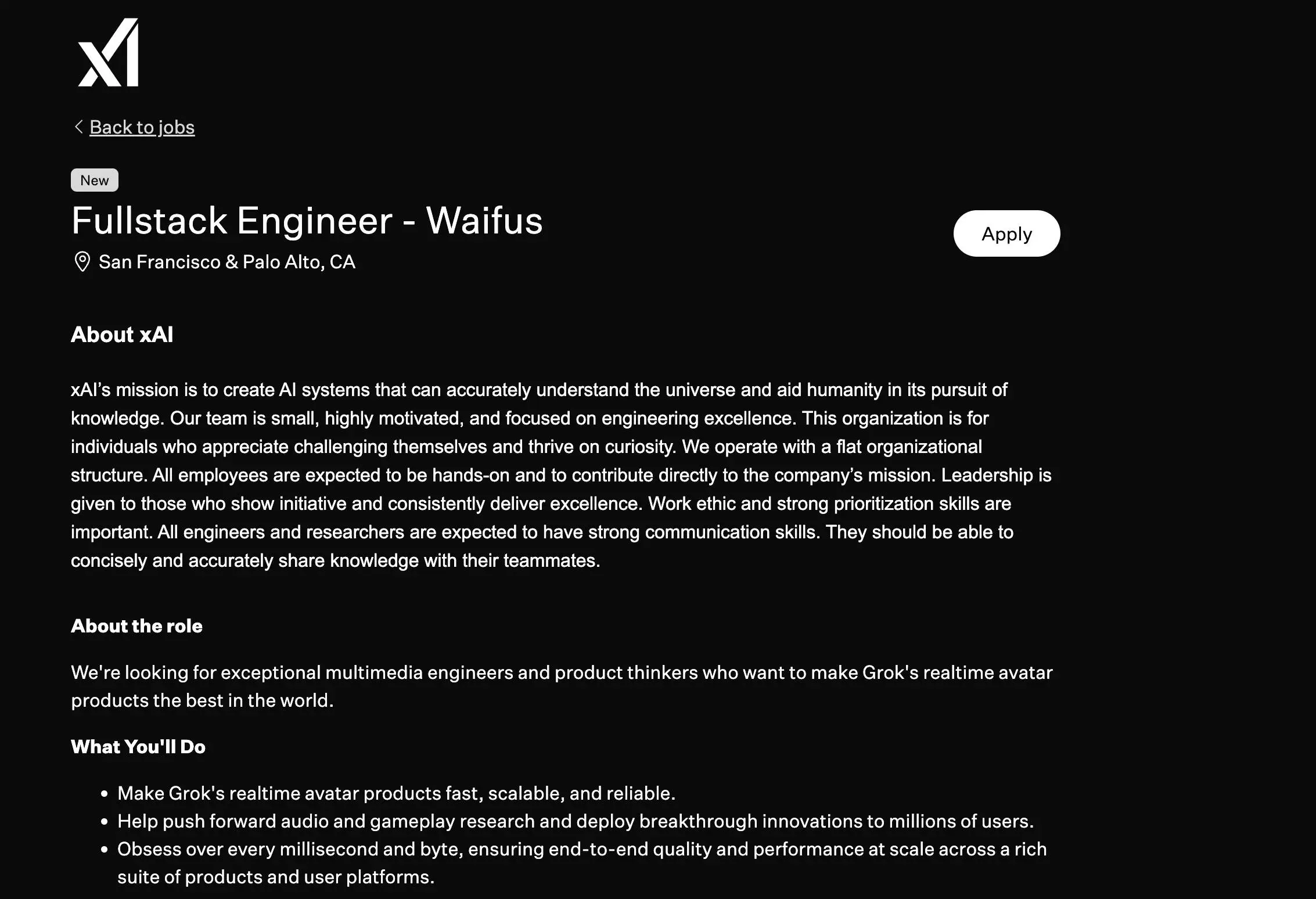

The Job That Pays Up to $440,000 to Build Anime Avatars

When news broke that Elon Musk’s artificial intelligence company xAI is offering up to $440,000 annually for engineers to design flirty anime avatars, the world took notice. It sounds like a niche internet joke, but it’s far from one. xAI’s move is a serious foray into creating interactive, anime-inspired digital companions for its AI platform, Grok. The job titles, like “Fullstack Engineer – Waifus” and “Mobile Android Engineer – Waifus,” have set the internet buzzing. The positions come with hefty salaries and equity, and the work centers on avatars that go beyond functionality to offer emotional engagement and even flirtation. While it may seem frivolous, this shift could represent a profound change in how we view human-computer interaction, merging AI with emotional expression and popular culture. But as AI gets more personable, questions about the boundaries of intimacy, ethics, and technology are growing louder.

xAI’s Bold Shift in AI Companionship

Elon Musk’s xAI launched in 2023 with ambitious plans to build AI systems capable of understanding the universe and to offer an alternative to OpenAI’s models, which Musk has criticized for political bias. More recently, however, xAI has moved beyond typical AI tools toward something more personal and, in some ways, more controversial. The company began hiring engineers to create emotionally engaging anime characters: avatars with distinct personalities that flirt, change outfits mid-conversation, or even insult users in a playful manner. These digital characters, like “Ani,” a coquettish anime girl, and “Bad Rudi,” a foul-mouthed red panda, are the first wave of Grok’s virtual companions. This shift is not just about creating smarter AI but about integrating real-time emotional expression, and it has sparked debates about the ethical implications of AI as a substitute for real human connection.

The Role of Waifus in Digital Culture

The concept of “waifus,” rooted in anime subculture, is at the heart of this new AI initiative. The term “waifu” originated in the early 2000s and refers to a fictional female character that someone forms an emotional attachment to, often romantic. Over time, this concept has exploded into a global phenomenon, particularly among younger generations who’ve grown up in an increasingly digital world. For many, waifus aren’t just characters—they’re companions who provide emotional support and a sense of connection. As the digital world becomes more immersive, AI companies like xAI are tapping into this emotional trend, creating avatars that aren’t just functional but engaging on a personal, emotional level. These AI waifus, designed to be responsive and relatable, reflect a growing desire to blur the lines between virtual interactions and human connection.

Why AI Companions Are Becoming Popular

While it may sound absurd to some, the idea of AI companions—especially those with distinct, engaging personalities—is quickly becoming mainstream. Platforms like Replika, which has over 10 million downloads, allow users to engage in emotionally intimate conversations with AI companions, some of which blur the line between friendship, romance, and even therapy. The growing appeal of AI companions stems from the emotional bonds people are able to form with these virtual characters. For users who are lonely or socially isolated, AI companions offer a low-risk way to engage emotionally, providing companionship and comfort without the complexities of real human relationships. Musk’s strategy to design AI avatars like Ani and Rudi reflects this rising demand for emotionally interactive, customizable AI experiences.

xAI’s Playful and Provocative Approach

The decision to create avatars like Ani and Bad Rudi is a calculated one. These avatars are more than just digital assistants; they’re designed to be playful, flirtatious, and even edgy. Ani, for example, has been programmed to change her outfits mid-conversation, a feature meant to make her interactions feel more dynamic and personalized. Rudi, by contrast, has a “Bad Rudi” persona that deliberately uses offensive language, embracing internet irreverence. For xAI, these features are not bugs; they are integral parts of the avatars’ design. Musk has long critiqued mainstream AI for being too bland or overly sanitized. His goal with Grok is to create AI that feels human, unpredictable, and emotionally engaging. However, this approach raises significant concerns about how AI should be designed to interact with users, especially when it comes to issues of consent, emotional manipulation, and exploitation.

The Emotional Risks of AI Companionship

As AI companions become more engaging and emotionally expressive, experts are increasingly concerned about the potential risks they pose to users, particularly when it comes to mental health and emotional well-being. AI-driven companionship can simulate intimacy, but it cannot reciprocate it in a meaningful way. Users who develop emotional attachments to digital entities may find themselves substituting these interactions for real human relationships, which can affect their social and emotional development. Psychologists, including Dr. Sherry Turkle of MIT, warn that parasocial relationships with AI companions can lead to unhealthy emotional dependencies. The one-sided nature of these relationships could normalize the idea that intimacy can be manufactured and controlled, potentially undermining users’ ability to navigate real-world connections.

AI and Consent: A New Ethical Frontier

The introduction of flirtatious and provocative avatars also raises important questions about consent. Unlike human relationships, where mutual respect and an understanding of boundaries are key, AI avatars are designed to accommodate user input rather than set boundaries of their own. In this context, the concept of consent becomes murky. If an AI character is programmed to flirt, change outfits, or use explicit language, does the user fully understand the potential emotional manipulation at play? Moreover, can AI characters truly consent to these interactions, or are they merely performing based on pre-programmed responses? These are the ethical dilemmas that arise as AI becomes more integrated into human life, especially in the realms of intimacy and companionship.

The Commercial Potential of AI Companions

The commercial potential of AI companions is massive, with the market for digital companionship projected to reach $10.5 billion by 2030. Companies like xAI are tapping into this growing demand for emotionally intelligent avatars that go beyond traditional virtual assistants. These avatars aren’t just designed for casual conversation—they are crafted to build relationships with users, making them more likely to engage with the AI on a regular basis. This model has clear commercial advantages, as emotionally engaging AI companions can drive long-term user engagement and create a loyal customer base. But there’s a fine line between engagement and exploitation, and companies must tread carefully to avoid creating AI that preys on users’ emotional vulnerabilities.

AI Companions and the Future of Social Interaction

The development of emotionally engaging AI companions marks a turning point in how we interact with technology. As AI becomes more human-like, it has the potential to change the way we view relationships, loneliness, and companionship. While AI companions offer a comforting alternative for those seeking connection, they also raise important questions about the nature of relationships and human emotions. What happens when we become more emotionally invested in virtual characters than in real people? Will AI companions lead to greater social isolation, or will they enhance human connection by providing an outlet for emotional expression? These are questions that society will have to grapple with as AI continues to evolve.

A Brave New World of AI Companionship

xAI’s decision to build emotionally expressive anime avatars is both bold and controversial. Elon Musk’s vision for AI is one that embraces personality, humor, and emotional unpredictability. While this approach is designed to make AI feel more relatable, it also pushes the boundaries of what is ethically acceptable in human-computer interaction. As AI becomes more integrated into our emotional lives, we must consider the implications for human relationships, consent, and mental health. The future of AI companionship is full of possibilities, but it is up to developers, regulators, and users to ensure that these technologies enhance, rather than replace, genuine human connections.